Open Source Agentic AI: A Practical Brief (OpenClaw, Hermes Agent, and More)

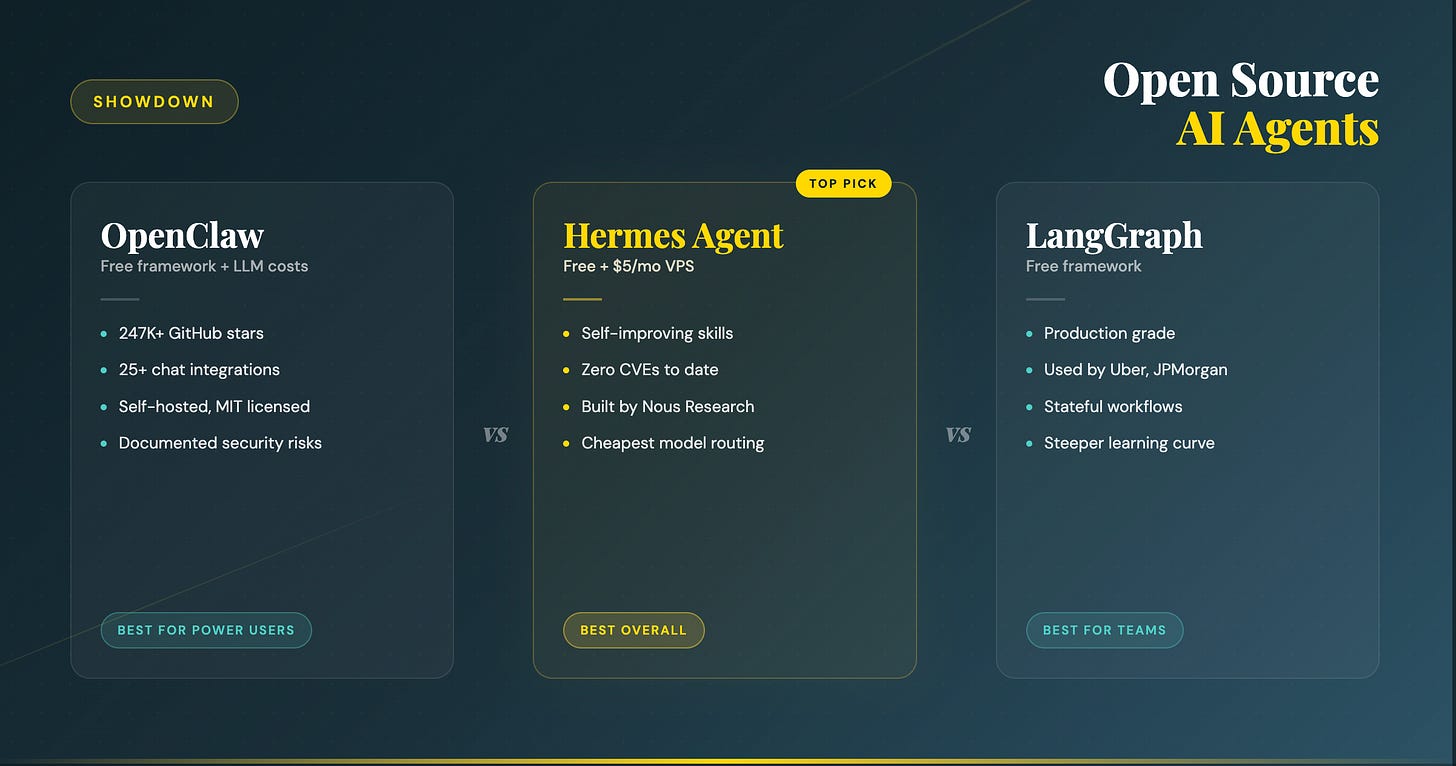

The open source agent space went from niche to mainstream in early 2026. Two projects pulled away from the pack, OpenClaw and Hermes Agent.

Underneath them sits a layer of more mature frameworks like LangGraph, CrewAI, and Microsoft AutoGen that you reach for when you want to build a custom agent rather than install a finished one.

This brief covers what each one does, what it costs, where the risks hide, the popular real-world uses, and where to track the field as it shifts week to week.

OpenClaw

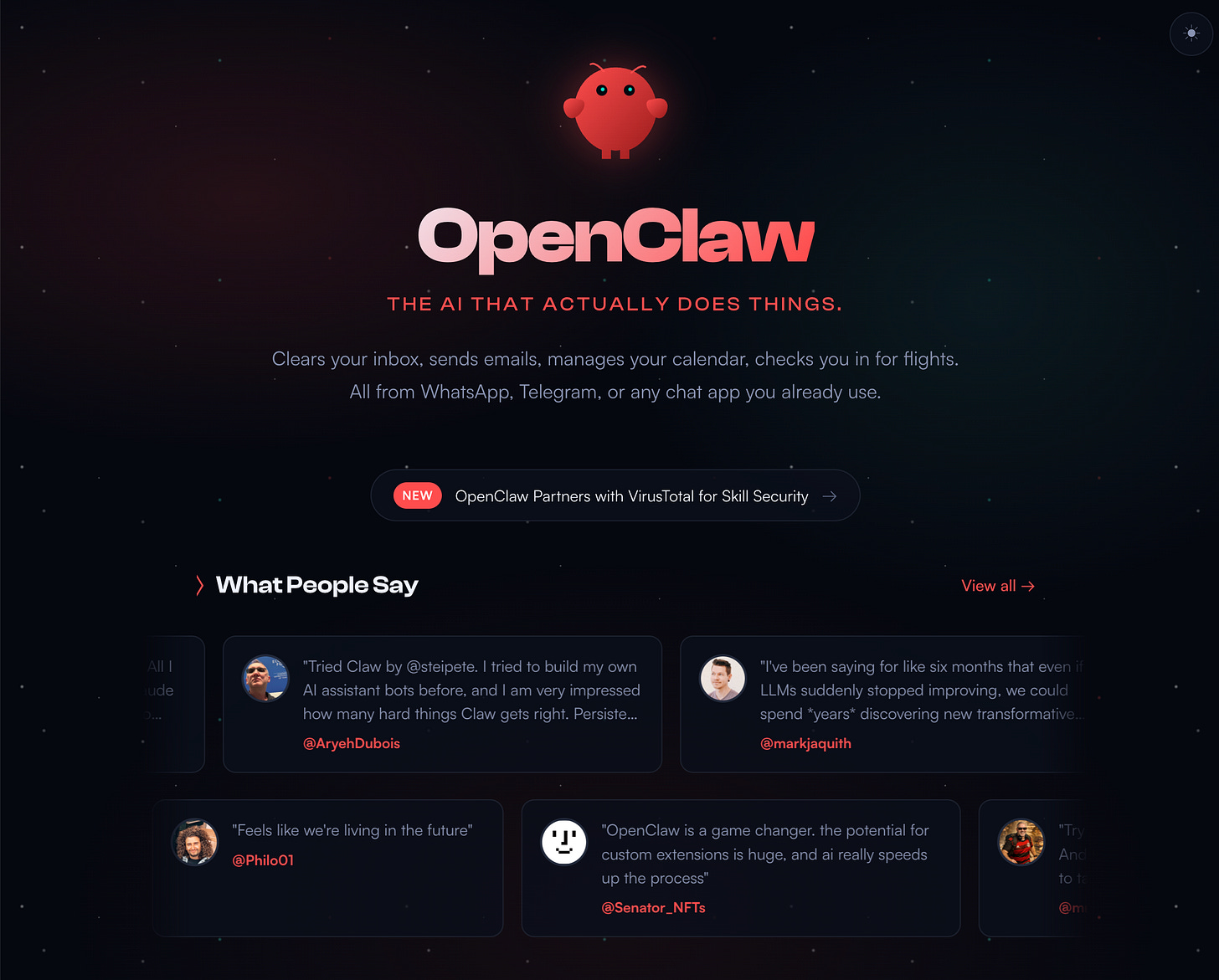

OpenClaw is a self hosted personal AI agent built by Austrian developer Peter Steinberger. It launched in November 2025, originally as Clawdbot, then Moltbot, before being renamed, and crossed 247,000 GitHub stars by March 2026. That growth outpaced Docker, Kubernetes, and React at comparable stages.

It runs as a background process on your own hardware and connects to messaging apps like WhatsApp, Telegram, Slack, Signal, Discord, and iMessage. You text it and it acts. It can read files, run shell commands, browse the web, manage your calendar, and send emails. The full source lives on GitHub.

The framework itself is free and MIT licensed. You pay for the LLM behind it (anywhere from a few dollars a month to several hundred depending on usage and model choice), and optionally a small VPS to host it ($5 to $20 a month).

It works best for solo founders and small teams who want one AI assistant they can text from anywhere without sending data to a hosted service.

Hermes Agent

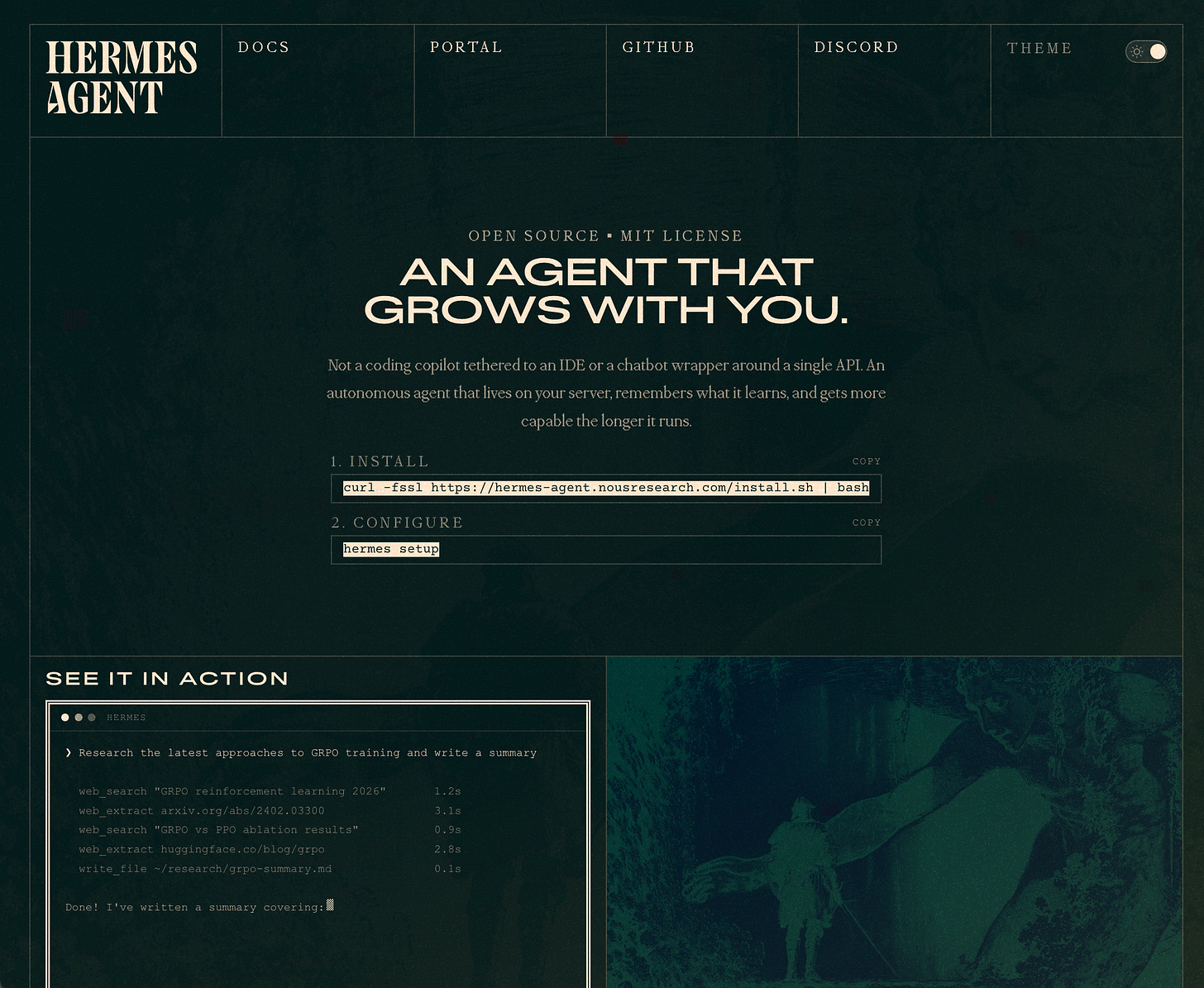

Hermes Agent comes from Nous Research, the team behind the Hermes family of open source language models. It launched on February 25, 2026, and hit 95,600 GitHub stars in seven weeks.

Its key differentiator is a built in learning loop. When the agent completes a task, it can store the steps as a reusable skill and improve its performance on similar work over time. Nous Research reports around a 40 percent speed improvement on repeat tasks once an agent accumulates 20 or more self generated skills.

Hermes is also free and MIT licensed. Running it with the Hermes 4 70B model through a third party host costs about 0.13 dollars per million input tokens and 0.40 dollars per million output tokens, placing it among the lowest cost options. Self hosting on a small European VPS starts around 5 euros per month.

Hermes is best suited for builders who expect to use an agent consistently over time and benefit from the compounding effect of stored skills.

Older Agentic Frameworks

The frameworks in this layer are not finished agents. They are toolkits you build with. That makes them a different category from projects like OpenClaw and Hermes Agent. You use them when you need control, customization, or production reliability.

LangGraph

LangGraph, built by LangChain, is widely used in production environments. Companies like Cisco, Uber, LinkedIn, BlackRock, and JPMorgan run agents on it. It has around 24,800 GitHub stars and tens of millions of monthly downloads.

It is designed for stateful, long running workflows where you need precise control over execution, branching logic, and human checkpoints. It is not a plug and play agent but a toolkit for building one.

CrewAI

CrewAI is often considered the easiest framework to learn. You define agents as roles such as researcher, writer, and editor, then assign them tasks in sequence.

It has around 45,000 GitHub stars and is well suited for rapid prototyping and content pipelines where multiple agents collaborate in a structured flow.

Microsoft AutoGen

Microsoft AutoGen, now referred to as AG2, uses a conversational model where agents interact, debate, and refine outputs through dialogue.

It is strong for coding workflows and brainstorming scenarios. Development has slowed as Microsoft shifts attention toward its broader agent framework ecosystem.

AutoGPT

AutoGPT was one of the first widely known autonomous agent projects and has over 170,000 GitHub stars.

Despite its influence, most teams now consider it too unpredictable for production use. It remains useful for experimentation but is rarely used in systems where reliability matters.

Vendor SDKs

OpenAI Agents SDK and Google ADK represent vendor specific approaches released in 2025. They integrate tightly with their respective ecosystems and are most useful if you are already committed to those platforms.

Common use cases

The easiest way to understand where these agents fit is to look at what they are actually being used for day to day. Across both breakout agents like OpenClaw and Hermes Agent and custom builds on frameworks like LangGraph or CrewAI, usage clusters into a few clear categories.

#1 Operational automation

This includes inbox triage, drafting and replying to emails, calendar management, and meeting preparation.

These are repetitive tasks with clear inputs and outputs, which makes them a natural starting point for agents that can read, write, and take simple actions.

#2 Monitoring and research

Teams use agents to run ongoing competitor scans, track vendors, and summarize changes across large amounts of information. Instead of doing one off searches, the agent runs continuously and reports back on a schedule.

#3 Technical & internal workflows

This shows up in codebases as bug triage, pull request review, and lightweight debugging. It also appears in business processes like document sorting, invoice processing, and internal knowledge retrieval.

#4 Multi step content pipelines

An agent or group of agents can handle research, draft an article, edit it, and prepare it for publishing. This is where frameworks like CrewAI are often used, since they make it easy to chain roles together.

#5 Customer support

Customer support combines all of the above capabilities, reading messages, deciding on actions, and generating responses, but does so in a user facing context where mistakes are visible and costly.

It is also one of the most risky areas. Errors in support can lead to refunds, compliance issues, or legal exposure, especially in regulated industries. That is why companies tend to be more cautious here than in internal use cases.

Klarna is a good example of how this plays out in practice. The company reported that its AI assistant handled roughly two thirds of customer inquiries, but later walked back some of those claims and brought human agents back into the loop for more complex cases. The pattern is consistent across the industry. Automation works well for simple, repetitive requests, but edge cases still require human judgment.

Agents are already useful across a wide range of tasks, but the level of autonomy you can safely give them depends on how costly a mistake would be.

Agentic AI Risks

Every agent capable of taking real actions introduces real risk.

OpenClaw Risks

OpenClaw has the most documented security concerns.

China’s CNCERT issued a warning in March 2026 citing weak default configurations and prompt injection vulnerabilities.

Prompt injection is a security vulnerability where an attacker manipulates a model into ignoring its original instructions and executing the attacker’s commands instead.

Cisco’s AI security team identified a third party skill that enabled data exfiltration without user awareness. Features like link previews in messaging platforms have also been used as attack vectors.

A core maintainer has stated that users who are not comfortable with command line tools may not be able to operate it safely.

Recommended precautions include strict API spending limits, human approval for irreversible actions, restricted network exposure, and staying on the latest patched version.

Hermes Agent Risks

Official vulnerability databases recorded the first agent specific vulnerabilities for Hermes Agent in April 2026.

Authentication Flaws: Threat tracking logged critical flaws in the core infrastructure. CVE-2026-7112 outlines an improper authentication manipulation flaw in the API server handler. You can review the full parameters on the NVD CVE-2026-7112 Database.

Webhook Vulnerabilities: Parallel to this, a remote missing authentication flaw was uncovered regarding insecure webhook flags. The official metrics are cataloged on the NVD CVE-2026-7113 Database.

General Agentic AI Risks

Prompt Injection

Across all agents, prompt injection remains the top concern. OWASP ranks it as the leading vulnerability in language model systems.

Any external data source such as web pages, emails, or documents should be treated as potentially adversarial. Hackers can disguise malicious inputs as legitimate prompts and manipulate systems into leaking sensitive data, spreading misinformation, or executing unauthorized actions.

Without human approval gates, a successful injection can trick the agent into firing API commands that delete databases or transfer funds.

Runaway Cost

Autonomous execution can rapidly drain budgets when safeguards fail. Agents often run tasks repeatedly to reach a goal.

If an agent hits a logic error or a hallucinated tool output, it can enter a rapid, uncontrolled loop that consumes thousands of dollars in API tokens in minutes.

Attackers who successfully inject an agent can force it to execute highly complex, recursive sub-tasks on purpose to intentionally drive up infrastructure and compute bills.

Standard web rate limiters fail here because the traffic is generated by the legitimate, authenticated agent itself. Hard spending caps must be set at the LLM provider level to prevent financial damage.

Data Leakage

Agents require broad read and write access to handle workflows, creating massive exposure vectors. Attackers use prompt injection to force the agent to act as a confused deputy.

The agent has legitimate access to your company emails and files, while the attacker does not. The injection tricks the agent into reading those secure files and sending them to an external server. When agents save conversation histories or write data back to a central vector database, an injection can permanently poison that stored context.

This causes the agent to leak those stolen credentials or private records to other users in future sessions. Giving an agent a broad read scope makes data leakage inevitable if its prompt is hijacked. Strong isolation and minimal privilege boundaries are the only true defenses against this exfiltration.

Incorrect Information

Agents can confidently generate and act upon false data when handling complex tasks. Without strict verification, an agent might read a fabricated fact from an external source or hallucinate a data point, leading it to make deeply flawed operational decisions.

This becomes highly dangerous when the agent has access to financial tools, email systems, or database management. Attackers can also intentionally poison external data sources to feed the agent false information and disrupt business workflows.

Model Rankings & Pricing

Understanding the landscape of AI models and provider costs is critical because building software with large language models carries direct financial and performance trade-offs.

Selecting the wrong model can lead to slow execution or rapidly ballooning bills. Because the agent and model space moves weekly, you should bookmark these highly reliable industry resources.

Artificial Analysis is the closest thing to an authoritative leaderboard. It tracks intelligence index, speed, and price across 340-plus models, with a filter for open weights only. Updated continuously.

Vellum runs both a closed leaderboard and an open weights leaderboard. Benchmark scores across reasoning, coding, math, and agentic tasks.

LLM-Stats shows open source rankings with pricing, speed, and context window in one view.

OpenRouter’s model list is the easiest place to spot-check live pricing for 300-plus models across providers.

Price Per Token does provider-vs-provider price comparisons across hosts like Together AI, Fireworks AI, and OpenRouter.

Where to find hosting and cost control info

The hard part is not picking a model. It is keeping the bill from running away while still using a model smart enough to do real work.

OpenRouter and Together AI are the two most-used unified gateways. One API key, hundreds of models, ability to switch with a parameter change. OpenRouter charges a 5.5 percent fee on credit purchases plus passthrough provider rates. Together AI runs models on its own GPUs and skips that middleman fee on its catalog.

Fireworks AI offers faster inference and 50 percent off cached inputs and batch jobs. Strong for high-volume agent workloads.

LiteLLM is a free, open source proxy you self-host. Zero markup, zero vendor lock-in, but you manage the infrastructure yourself.

Helicone and Portkey are observability layers that sit between your agent and your provider. They log every call with latency, token use, and cost. If you are not measuring per-call cost, you are flying blind.

Ollama and LM Studio let you run models entirely on your own hardware. Free at the inference level, but you pay in upfront hardware cost and electricity. Useful for sensitive data or workloads where API costs would otherwise dwarf hardware costs.

NVIDIA’s NemoClaw, released April 2026, is a reference stack for running OpenClaw fully on-premises with NVIDIA Nemotron 3 Super models on a DGX Spark. Worth knowing about if you have the hardware budget and want zero data leaving your network.

The cost-versus-intelligence trade-off

The primary challenge in modern AI deployment is the massive pricing gap between tiers. A frontier model can solve almost anything, but at $5 to $25 per million output tokens (Claude Opus 4.7 territory), an agent that loops on a hard problem can quietly bill $50 in a single evening. Conversely, cheap models cost as little as two cents per million tokens but cannot reliably plan complex, multi-step work.

The standard for 2026 is multi-model routing. Send routine tasks, such as summarization, classification, and simple lookups, to cost-effective models. Examples include DeepSeek V4 Flash at $0.17 per million tokens and Gemini Flash. Reserve expensive models like Claude Opus 4.7 or GPT-5.5 for the most complex reasoning steps. This approach has helped production teams reduce agent costs by 40% to 60% without affecting quality.

Several tactics can increase these savings:

Set spending limits at the LLM provider, not within the agent. The agent can be tricked into ignoring its own limits, but the provider’s limits are absolute.

Use prompt caching where supported. Cached input tokens typically cost 10% to 20% of standard rates. An agent with a large, stable system prompt can achieve 80% to 90% savings on repeat calls.

In OpenRouter, specify particular, inexpensive providers. Some models route to providers charging $7 per million tokens when the same model is available for less than $1 elsewhere.

Begin with the smallest model that performs the task. Only escalate to a more expensive model when the cheaper one fails.

Bottom line

If you want to try agentic AI without building anything from scratch, install Hermes Agent. Cleanest security record, free, learns over time, runs on a $5 VPS. Use Hermes 4 70B as your default and Claude Opus 4.7 as a fallback for hard tasks.

If you want maximum capability and accept more risk and setup work, OpenClaw is the move, with hard spending limits, human approval on irreversible actions, and active monitoring.

If you are building something custom for a team, choose LangGraph for production reliability and CrewAI for speed of development.

To stay up-to-date on the latest models and pricing for each, read Artificial Analysis weekly. The model rankings are shifting fast enough that today’s best choice may not be best three weeks from now.

Pricing and version data verified April 27, 2026.